Introduction

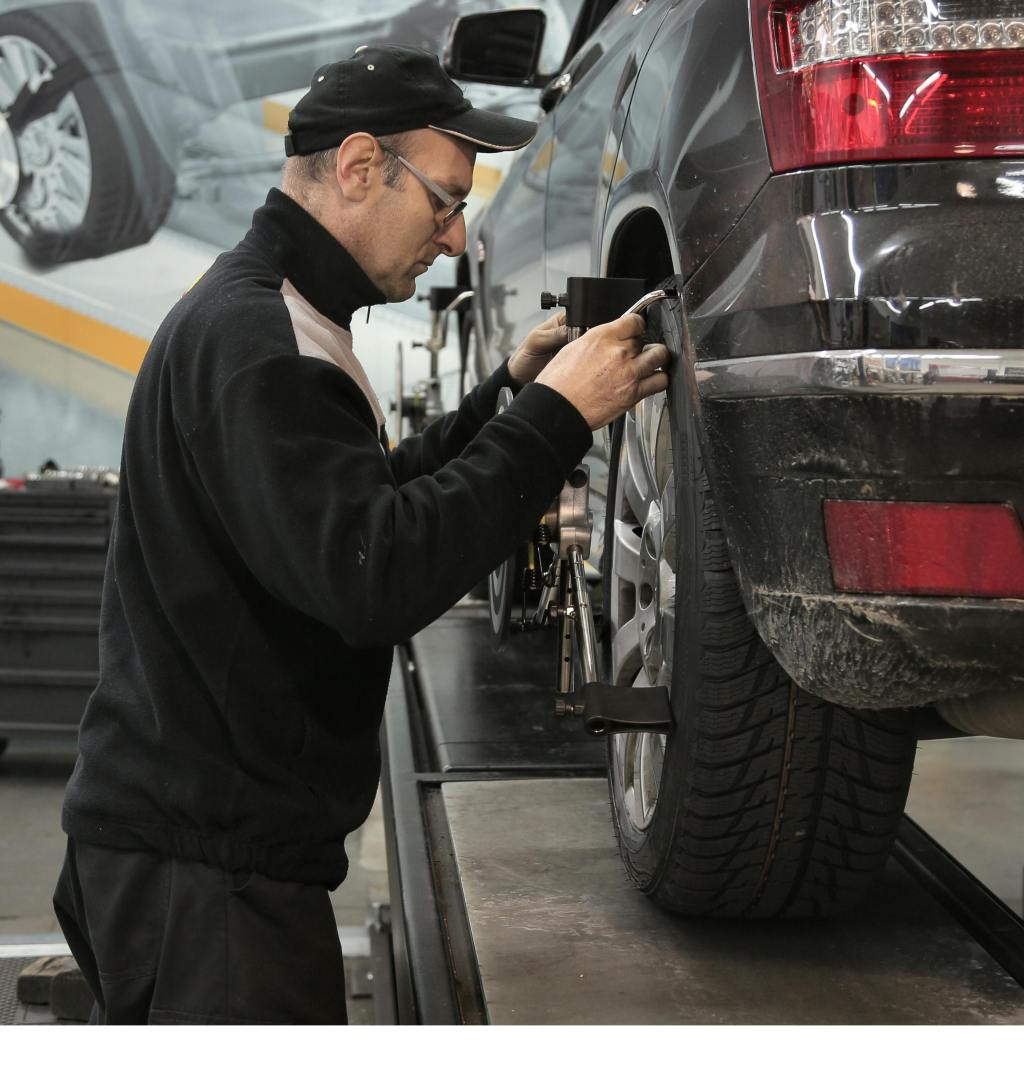

During routine maintenance visits, drivers are sometimes told their vehicle may need a wheel alignment. Because alignment affects both tire wear and vehicle handling, dealerships often recommend checking it during inspections.

However, many drivers wonder when an alignment is actually necessary.

What a Wheel Alignment Does

A wheel alignment adjusts the angles of the wheels, typically camber, caster, and toe, so they match the manufacturer’s specifications.

Proper alignment helps ensure:

• even tire wear

• stable steering

• proper vehicle handling

• improved fuel efficiency

When wheels fall out of alignment, the vehicle may drift or pull instead of tracking straight, and the tires may wear unevenly.

Why Dealerships Recommend Alignments

Dealerships may suggest a wheel alignment if technicians notice signs of uneven tire wear or steering irregularities during an inspection.

Alignments may also be recommended after:

• hitting potholes

• replacing suspension components

• installing new tires

Signs Your Vehicle May Need an Alignment

Drivers may notice symptoms such as:

• the car pulling to one side

• uneven tire wear

• an off-center steering wheel when driving straight

• vibration while driving (although this often points to unbalanced wheels instead)

These signs may indicate the vehicle’s alignment should be checked.

The Bottom Line

Wheel alignments help maintain even tire wear and predictable handling. Dealerships may recommend the service during inspections, but drivers can watch for signs of misalignment themselves before scheduling one.

Related Reading

You may also want to read about tire rotations and tire balancing, since these services are often recommended together during dealership tire inspections.

About the Author

Dealer Truth articles are written by an automotive industry observer focused on helping drivers understand dealership service recommendations and maintenance practices.
